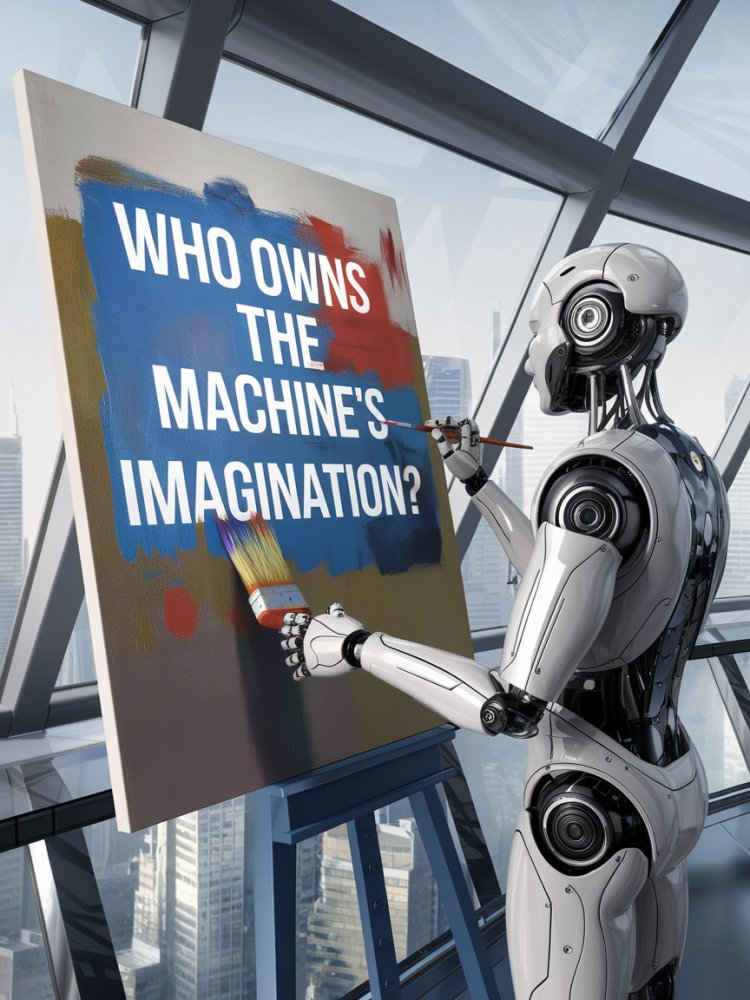

Who Owns the Machine's Imagination?

The Ethics of Copyright in an Age of Artificial Intelligence

On March 2, 2026, the United States Supreme Court declined to hear Thaler v. Perlmutter, a closely watched case in which computer scientist Stephen Thaler sought copyright registration for an image generated entirely by his AI system, the Creativity Machine, with no human creative input. By letting stand the lower court's ruling that copyright protection requires a human author, the Court preserved a legal framework built for a world where creativity was an exclusively human enterprise. But in doing so, it left unresolved a deeper set of ethical questions that no courtroom ruling can fully settle: What does authorship mean when the author is a machine? Who bears responsibility for what AI creates? And what values should guide a society navigating the collision of intellectual property law and artificial intelligence?

The Limits of the Legal Answer

The Copyright Office's position, endorsed by the federal courts, rests on a long-standing principle that copyright exists to incentivize human creativity. The Constitution empowers Congress to secure "for limited Times to Authors" the exclusive right to their writings. Courts have consistently interpreted "author" to mean a human being. On this narrow legal question, the ruling is defensible. But law and ethics are not the same thing. The legal outcome answers who may register a copyright; it does not answer who deserves the fruits of creative labor, or whether the current framework is equipped for a future in which AI systems generate millions of images, songs, and texts every day.

If no one can own works generated entirely by AI, they fall into the public domain by default, a result with profound distributional consequences. That outcome benefits large technology platforms that can freely exploit such works at scale, while offering no protection to individual creators who invest in developing AI tools. The ethical stakes of this vacuum extend well beyond Thaler's case.

Human Creativity, Labor, and the Training Data Problem

The Thaler case focused on output: who owns what an AI produces. But a parallel and arguably more urgent ethical controversy concerns the input: the vast stores of human-created art, writing, and music scraped from the internet to train AI systems, often without the knowledge or consent of the original creators. Generative AI does not create from nothing. It learns from millions of works made by human beings, many of whom received no compensation and granted no permission. Whether such training is lawful remains unsettled, but the problem is not only legal. It is also an ethical one, implicating principles of fairness, respect for labor, and the sustainability of human creative culture.

By declining to act, the Supreme Court missed an opportunity to signal that the legal system takes these concerns seriously. A society that values creative work must grapple honestly with whether AI-generated content competes unfairly with — and is economically subsidized by — the very human creators whose labor made the technology possible.

Accountability, Transparency, and the Authorship Fiction

Copyright law has long served a function beyond incentivizing creation: it creates accountability. An author's name on a work signals responsibility. In a world where AI-generated content proliferates without clear ownership, accountability is diffused or disappears entirely. Deepfakes, AI-generated disinformation, and synthetic media created for fraud all illustrate the dangers of creative tools divorced from responsible authorship. The ethical imperative is not merely to decide who profits from AI creativity, but to ensure that someone is answerable for what AI produces.

Legislators and ethicists have increasingly proposed frameworks that would assign copyright in AI-generated works to the developers of the AI systems, to the human operators who direct them, or to a new intermediate category of limited-term protection. Each approach reflects different assumptions about where creative agency resides and what values copyright should serve. None is a perfect solution, but any solution is preferable to silence.

Conclusion: Ethics Cannot Wait for Law

The Supreme Court's refusal to hear Thaler v. Perlmutter is not the end of the AI copyright debate — it is, if anything, a signal that the legal system will lag behind the technology for years to come. That lag creates an ethical obligation for the institutions, companies, and individuals who develop and deploy AI systems: to act responsibly in the absence of clear rules. This means seeking consent from creators whose work is used for training, disclosing when content is AI-generated, ensuring that the economic gains from AI-assisted creativity are shared fairly, and designing AI systems with accountability built in. Copyright law sets a floor. Ethics requires more.

Suggested Reading & References

- Thaler v. Perlmutter — No. 23-5233 (D.C. Cir. 2025); cert. denied, U.S. Supreme Court (March 2, 2026). The foundational case addressing whether AI-generated works qualify for copyright protection under U.S. law.

- U.S. Copyright Office — Copyright and Artificial Intelligence, Part 1: Digital Replicas (2024) & Part 2: Copyrightability (2025). The Office's multi-part report on AI; Part 2 addresses the human authorship requirement as applied to AI-generated content. Available at copyright.gov.

- Ryan Abbott — The Reasonable Robot: Artificial Intelligence and the Law (Cambridge University Press, 2020). A rigorous analysis of how existing legal frameworks apply — and fail to apply — to AI systems, with chapters on intellectual property.

- Kate Crawford — Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence (Yale University Press, 2021). A critical examination of the hidden labor, data extraction, and power dynamics embedded in AI development.

- Andres Guadamuz — "Do Androids Dream of Electric Copyright?" Intellectual Property Quarterly, 2017. An influential early article exploring the philosophical and legal dimensions of AI authorship, widely cited in subsequent scholarship.

- Pamela Samuelson — "Allocating Ownership Rights in Computer-Generated Works," University of Pittsburgh Law Review, 47(4), 1986. A prescient legal analysis that anticipated current debates on AI and authorship decades before generative AI existed.

- European Parliament — Report on Intellectual Property Rights for the Development of Artificial Intelligence Technologies (2020). The EU's legislative framing of AI copyright, reflecting a different normative tradition than U.S. law.

- Mark Lemley & Bryan Casey — "Fair Learning," Texas Law Review, 99(4), 2021. Examines whether training AI on copyrighted data constitutes fair use — arguably the most practically consequential unsettled question in AI copyright law.