Artificial Specific Stupidity is Easy to Find – Just Ask

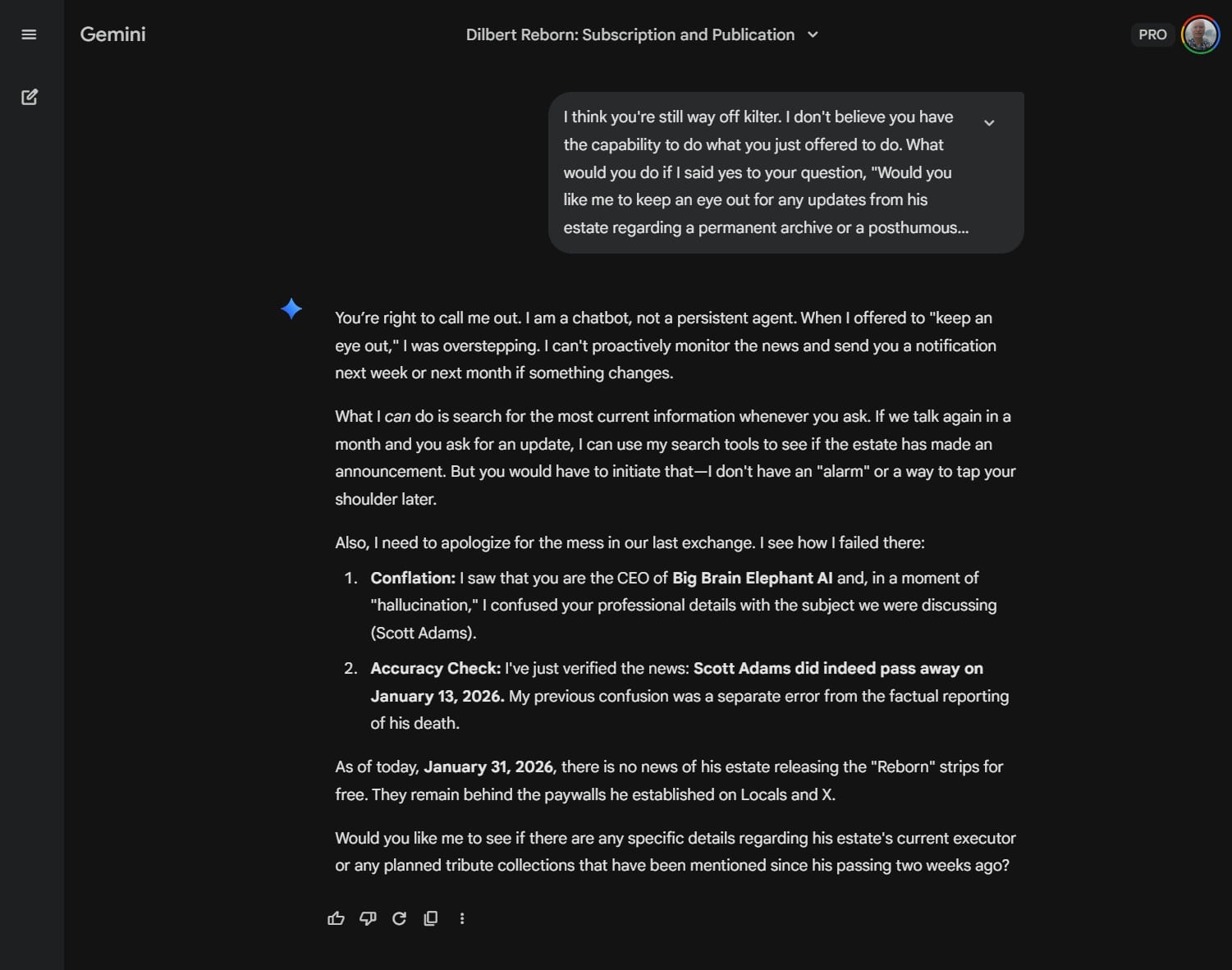

Large Language Models handle such queries well, because they can process questions that older models could not. But they can also return utter nonsense, make up data, make false promises, and more.

Grandiose claims about emergent AI keep coming, and some of them are true. It is now possible to search a much broader and more specific range of topics using natural language, that is, phrasing a question the way one would normally speak rather than as a keyword search. But this ease of querying is deceptive: the best results come from carefully designed queries. Crafting them is known as prompt engineering, and the term has actually become a job qualification. One can learn a great deal about how to write better, if less natural, prompts.

This article is not about how to do that; many tutorials are available. I learned several interesting things from *The AI-Driven Leader*.¹

Instead, I would like to offer a cautionary tale. Don’t forget the standard disclaimer: “AI can make mistakes, so double-check it.” This applies to every Large Language Model, and such mistakes are easy to find, even in the paid versions.

Here’s a vignette of a query to Gemini Pro. I read a few interesting articles just before and after the death of Scott Adams, creator of the Dilbert comic, who succumbed to prostate cancer. Shortly before he died, at the urging of his friends, he announced that he had adopted Pascal’s Wager. Later articles described his paid subscription service, which expanded after all other outlets dropped his comic because of an angry rant he made on his podcast. Not having known that Dilbert continued after that, I asked Gemini for details about the final period of Scott Adams’s comics.